Quick Start

The Oxen.ai chat completions API is fully OpenAI-compatible. You can use the OpenAI SDK, curl, or any HTTP client that speaks the OpenAI chat format.

Base URL: https://hub.oxen.ai/api/ai

Endpoint: POST /chat/completions (relative to the base URL; full URL https://hub.oxen.ai/api/ai/chat/completions)

Browse all available models.

    curl -X POST https://hub.oxen.ai/api/ai/chat/completions \
      -H "Authorization: Bearer $OXEN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "claude-sonnet-4-6",
        "messages": [
          {"role": "user", "content": "What is a great name for an ox?"}
        ]
      }'
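The same request can be sketched in Python using only the standard library. This is a minimal illustration, not an official client: the helper names `build_chat_request` and `send` are hypothetical, and the `"missing-key"` fallback is a placeholder so the snippet runs without an API key. The network call is left commented out.

```python
import json
import os
import urllib.request

BASE_URL = "https://hub.oxen.ai/api/ai"  # base URL given on this page


def build_chat_request(model, messages, api_key):
    """Build (but do not send) a POST request for the chat completions endpoint."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and decode the JSON response body (performs a network call)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


request = build_chat_request(
    "claude-sonnet-4-6",
    [{"role": "user", "content": "What is a great name for an ox?"}],
    os.environ.get("OXEN_API_KEY", "missing-key"),  # placeholder when unset
)
# response = send(request)  # uncomment to call the API
```

Because the API is OpenAI-compatible, the OpenAI Python SDK should also work when its client is constructed with `base_url` set to the base URL above and `api_key` set to your Oxen.ai key.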

Authentication

Every request requires a Bearer token in the Authorization header. You can find your API key in your account settings.

Authorization: Bearer $OXEN_API_KEY

The API returns an OpenAI-compatible JSON response:


    {
      "id": "chatcmpl-af41f027-e4d5-4c4b-ac40-625fb4ebfb1e",
      "object": "chat.completion",
      "created": 1774040155,
      "model": "claude-sonnet-4-6",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "How about \"Beauregard\"?"
          },
          "finish_reason": "stop"
        }
      ],
      "usage": {
        "prompt_tokens": 11,
        "completion_tokens": 4,
        "total_tokens": 15
      }
    }

| Field | Description |
|---|---|
| id | Unique identifier for the completion |
| object | Always "chat.completion" |
| created | Unix timestamp of when the completion was created |
| model | The model that generated the response |
| choices | Array of completion choices (typically one) |
| choices[].message.content | The generated text |
| choices[].finish_reason | Why generation stopped: "stop" (natural end), "length" (hit max_tokens), or "tool_calls" (the model requested tool calls) |
| usage | Token counts for the request: prompt_tokens, completion_tokens, and total_tokens |
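As a small illustration of reading these fields, the sketch below pulls the generated text, the finish reason, and the token total out of a response that has already been decoded into a Python dict. The function name `summarize_completion` is hypothetical, and the sample data is the response shown above.

```python
def summarize_completion(response):
    """Extract the commonly used fields from a chat.completion response dict."""
    choice = response["choices"][0]  # typically the only choice
    return {
        "text": choice["message"]["content"],
        "finish_reason": choice["finish_reason"],
        # "length" means generation stopped because max_tokens was reached
        "truncated": choice["finish_reason"] == "length",
        "total_tokens": response["usage"]["total_tokens"],
    }


# Sample response from this page, as a Python dict.
sample = {
    "id": "chatcmpl-af41f027-e4d5-4c4b-ac40-625fb4ebfb1e",
    "object": "chat.completion",
    "created": 1774040155,
    "model": "claude-sonnet-4-6",
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": 'How about "Beauregard"?'},
            "finish_reason": "stop",
        }
    ],
    "usage": {"prompt_tokens": 11, "completion_tokens": 4, "total_tokens": 15},
}

summary = summarize_completion(sample)
print(summary["text"])
```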

Parameters

| Parameter | Type | Default | Description |
|---|---|---|---|
| model | string | required | Model name, e.g. "claude-sonnet-4-6", "gpt-5-4-2026-03-05", "gemini-3-1-flash-lite-preview" |
| messages | array | required | Array of message objects with role and content |
| max_tokens | integer | model default | Maximum number of tokens to generate |
| temperature | float | model default | Sampling temperature (0 to 2). Lower is more deterministic. |
| stream | boolean | false | Enable streaming with server-sent events |
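One way to assemble a request body from these parameters is sketched below. The helper `build_payload` is hypothetical. The client-side checks are an assumption made for illustration (the server performs its own validation), and optional fields are left out when unset so that the model defaults listed in the table apply.

```python
def build_payload(model, messages, max_tokens=None, temperature=None, stream=False):
    """Assemble a chat completions request body, omitting unset optional fields."""
    if not model:
        raise ValueError("model is required")
    if not messages:
        raise ValueError("messages must contain at least one message")
    if temperature is not None and not 0 <= temperature <= 2:
        raise ValueError("temperature must be between 0 and 2")

    payload = {"model": model, "messages": messages}
    if max_tokens is not None:
        payload["max_tokens"] = max_tokens
    if temperature is not None:
        payload["temperature"] = temperature
    if stream:
        payload["stream"] = True  # false is the default, so only send when enabled
    return payload


payload = build_payload(
    "claude-sonnet-4-6",
    [{"role": "user", "content": "What is a great name for an ox?"}],
    max_tokens=64,
    temperature=0.2,
)
```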

Messages

Each message in the messages array has a role and content:

| Role | Description |
|---|---|
| system | Sets the behavior and context for the model |
| user | The user’s input |
| assistant | Previous model responses (for multi-turn conversations) |

    {
      "messages": [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What is the capital of France?"},
        {"role": "assistant", "content": "The capital of France is Paris."},
        {"role": "user", "content": "What is its population?"}
      ]
    }
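The example above resends the earlier turns with each request, which suggests that the client is responsible for keeping the conversation history. A minimal sketch of that bookkeeping follows; the helper name `append_turn` is hypothetical.

```python
def append_turn(messages, user_text, assistant_text):
    """Return a new history with one completed user/assistant exchange appended."""
    return messages + [
        {"role": "user", "content": user_text},
        {"role": "assistant", "content": assistant_text},
    ]


# Start with the system prompt, then record the first exchange.
history = [{"role": "system", "content": "You are a helpful assistant."}]
history = append_turn(
    history,
    "What is the capital of France?",
    "The capital of France is Paris.",
)

# The next request carries the whole history plus the new user message.
next_request = {
    "model": "claude-sonnet-4-6",
    "messages": history + [{"role": "user", "content": "What is its population?"}],
}
```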

Streaming

Set "stream": true to receive responses as server-sent events (SSE). Each event is a chat.completion.chunk object with a delta instead of a message.

    curl -X POST https://hub.oxen.ai/api/ai/chat/completions \
      -H "Authorization: Bearer $OXEN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gemini-3-1-flash-lite-preview",
        "messages": [
          {"role": "user", "content": "Write a haiku about data."}
        ],
        "stream": true
      }'

Each event line starts with data: and contains a JSON chunk:

data: {"id":"chatcmpl-...","object":"chat.completion.chunk","created":1774040190,"model":"gemini-3-1-flash-lite-preview","choices":[{"index":0,"delta":{"content":"hello"},"finish_reason":null}]}
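A rough sketch of consuming such a stream: read it line by line, strip the `data:` prefix, decode the JSON chunk, and concatenate each `delta.content`. The function name `iter_deltas` is hypothetical. Treating `data: [DONE]` as the end-of-stream marker follows the OpenAI convention and is an assumption here, since this page does not show how the stream ends.

```python
import json


def iter_deltas(lines):
    """Yield the text fragments carried by chat.completion.chunk SSE lines."""
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and any non-data lines
        data = line[len("data:"):].strip()
        if data == "[DONE]":  # assumed OpenAI-style terminator
            break
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text


# Hand-written sample lines in the chunk format shown above.
sample_lines = [
    'data: {"object":"chat.completion.chunk","choices":[{"index":0,"delta":{"content":"hello"},"finish_reason":null}]}',
    "",
    'data: {"object":"chat.completion.chunk","choices":[{"index":0,"delta":{"content":" world"},"finish_reason":null}]}',
    "",
    'data: {"object":"chat.completion.chunk","choices":[{"index":0,"delta":{},"finish_reason":"stop"}]}',
    "data: [DONE]",
]

full_text = "".join(iter_deltas(sample_lines))
print(full_text)
```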

Vision

Models that support vision (such as gemini-3-1-pro-preview or claude-sonnet-4-6) accept images in the messages array. For full details and examples including base64 encoding and video understanding, see Vision Language Models.

    curl -X POST https://hub.oxen.ai/api/ai/chat/completions \
      -H "Authorization: Bearer $OXEN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gemini-3-1-pro-preview",
        "messages": [
          {
            "role": "user",
            "content": [
              {"type": "text", "text": "What is in this image?"},
              {"type": "image_url", "image_url": {"url": "https://oxen.ai/assets/images/homepage/hero-ox.png"}}
            ]
          }
        ]
      }'
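The multi-part `content` array can be built programmatically, as in the sketch below. The helper names `image_message` and `data_url` are hypothetical. Passing a local image as a base64 `data:` URL follows the OpenAI convention and is an assumption here; the Vision Language Models page is the authoritative description of base64 input.

```python
import base64


def image_message(prompt, image_url):
    """Build a user message that pairs a text prompt with one image."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


def data_url(image_bytes, mime_type="image/png"):
    """Encode raw image bytes as a data URL (assumed to be accepted in place of an https URL)."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


message = image_message(
    "What is in this image?",
    "https://oxen.ai/assets/images/homepage/hero-ox.png",
)
```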

Tool Calling

To let a model call functions in your application, send a tools array describing each function’s JSON Schema; the model may reply with tool_calls instead of plain text. You execute those functions in your app, then send the results back in new tool messages so the model can finish the answer.

| Concept | Description |
|---|---|
| tools | Array of { "type": "function", "function": { "name", "description", "parameters" } } objects. parameters is a JSON Schema object for the arguments. |
| tool_choice | Optional. "auto" (default) lets the model decide; "none" disables tools; or force a specific function with {"type": "function", "function": {"name": "..."}}. |
| Assistant tool_calls | When finish_reason is "tool_calls", choices[0].message.tool_calls lists each call with id, function.name, and function.arguments (a JSON string). |
| tool messages | Each result uses role: "tool", tool_call_id matching the call’s id, and content as a string (often JSON your tool returned). |

First request: define the tool and ask a question. The model may respond with tool_calls instead of user-facing content:

    curl -X POST https://hub.oxen.ai/api/ai/chat/completions \
      -H "Authorization: Bearer $OXEN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gpt-5-4-2026-03-05",
        "messages": [
          {"role": "user", "content": "What is the weather in Paris?"}
        ],
        "tools": [
          {
            "type": "function",
            "function": {
              "name": "get_weather",
              "description": "Get current weather for a city",
              "parameters": {
                "type": "object",
                "properties": {
                  "city": {"type": "string", "description": "City name"}
                },
                "required": ["city"]
              }
            }
          }
        ]
      }'

    {
      "choices": [
        {
          "finish_reason": "tool_calls",
          "index": 0,
          "message": {
            "content": null,
            "role": "assistant",
            "tool_calls": [
              {
                "function": {
                  "arguments": "{\"city\":\"Paris\"}",
                  "name": "get_weather"
                },
                "id": "call_GRNwPXnbuQW4Sa3QNB3FYkYw",
                "index": 0,
                "type": "function"
              }
            ]
          }
        }
      ],
      "created": 1774809792,
      "id": "chatcmpl-1ce4aeac-6c34-468a-ba6b-b96c5372a1dc",
      "model": "gpt-5-4-2026-03-05",
      "object": "chat.completion",
      "usage": {
        "completion_tokens": 67,
        "prompt_tokens": 572,
        "total_tokens": 639
      }
    }

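To continue, resend the conversation with the original user message, the assistant message containing tool_calls, and one tool message per call. Replace IDs and tool_calls with values from the first response. Repeat until finish_reason is "stop" (or "length") and there are no new tool_calls.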

Follow-up request (curl)

The follow-up HTTP body matches what the OpenAI SDK builds when you append assistant and tool messages in a loop.

    curl -X POST https://hub.oxen.ai/api/ai/chat/completions \
      -H "Authorization: Bearer $OXEN_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gpt-5-4-2026-03-05",
        "messages": [
          {
            "role": "user",
            "content": "What is the weather in Paris?"
          },
          {
            "role": "assistant",
            "content": null,
            "tool_calls": [
              {
                "id": "call_01ABC",
                "type": "function",
                "function": {
                  "name": "get_weather",
                  "arguments": "{\"city\": \"Paris\"}"
                }
              }
            ]
          },
          {
            "role": "tool",
            "tool_call_id": "call_01ABC",
            "content": "{\"temperature_c\": 18, \"conditions\": \"Partly cloudy\"}"
          }
        ],
        "tools": [
          {
            "type": "function",
            "function": {
              "name": "get_weather",
              "description": "Get current weather for a city",
              "parameters": {
                "type": "object",
                "properties": {
                  "city": {
                    "type": "string",
                    "description": "City name"
                  }
                },
                "required": ["city"]
              }
            }
          }
        ]
      }'
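The request, execute, resend cycle can be sketched as a small loop. Everything named here is illustrative rather than part of the API: `run_tool_loop` and its `max_rounds` safety limit are hypothetical, `get_weather` returns canned data instead of calling a weather service, and `scripted_send` is an offline stand-in for the HTTP call so the example runs without a network connection.

```python
import json


def get_weather(city):
    """Hypothetical local tool; returns canned data for illustration."""
    return {"temperature_c": 18, "conditions": "Partly cloudy"}


def run_tool_loop(send, payload, handlers, max_rounds=5):
    """Call send() repeatedly, answering tool_calls, until the model returns text.

    send: callable that takes a request dict and returns a response dict.
    handlers: maps each tool name to the Python function that implements it.
    """
    messages = list(payload["messages"])
    for _ in range(max_rounds):
        response = send({**payload, "messages": messages})
        message = response["choices"][0]["message"]
        tool_calls = message.get("tool_calls") or []
        if not tool_calls:
            return message.get("content"), messages
        # Echo the assistant turn, then add one tool message per call.
        messages.append(
            {"role": "assistant", "content": message.get("content"), "tool_calls": tool_calls}
        )
        for call in tool_calls:
            args = json.loads(call["function"]["arguments"])  # arguments is a JSON string
            result = handlers[call["function"]["name"]](**args)
            messages.append(
                {"role": "tool", "tool_call_id": call["id"], "content": json.dumps(result)}
            )
    raise RuntimeError("tool loop did not finish within max_rounds")


def scripted_send(request):
    """Offline stand-in for the API: asks for a tool first, then answers."""
    if request["messages"][-1]["role"] == "tool":
        reply = {"role": "assistant", "content": "It is 18 C and partly cloudy in Paris."}
        return {"choices": [{"index": 0, "finish_reason": "stop", "message": reply}]}
    call = {
        "id": "call_01ABC",
        "type": "function",
        "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'},
    }
    reply = {"role": "assistant", "content": None, "tool_calls": [call]}
    return {"choices": [{"index": 0, "finish_reason": "tool_calls", "message": reply}]}


payload = {
    "model": "gpt-5-4-2026-03-05",
    "messages": [{"role": "user", "content": "What is the weather in Paris?"}],
    "tools": [{"type": "function", "function": {"name": "get_weather"}}],  # schema abbreviated
}
answer, transcript = run_tool_loop(scripted_send, payload, {"get_weather": get_weather})
print(answer)
```

In real use, `send` would be replaced by a function that posts the request dict to the chat completions endpoint and returns the decoded JSON, and the `tools` entry would carry the full description and parameters schema shown in the curl example.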

Errors

The API returns errors as JSON with an error object and a standard HTTP status code.

| Status | Meaning |
|---|---|
| 400 | Bad request (missing model, empty messages, invalid parameters) |
| 401 | Invalid or missing API key |
| 429 | Rate limit exceeded |
| 500 | Internal server error |

    {
      "error": {
        "message": "You must specify a model to call"
      }
    }
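A client might handle these responses along the following lines. This is a sketch under stated assumptions: the helper names are hypothetical, and retrying 429 and 500 with exponential backoff is a common client-side practice rather than documented Oxen.ai guidance.

```python
import json

# Assumption: rate limits and server errors are worth retrying; 400 and 401 are not.
RETRYABLE_STATUSES = {429, 500}


def error_message(body):
    """Extract error.message from an error response body, falling back to the raw text."""
    try:
        return json.loads(body)["error"]["message"]
    except (ValueError, KeyError, TypeError):
        return body.strip() or "unknown error"


def should_retry(status):
    """Return True when a later attempt could plausibly succeed."""
    return status in RETRYABLE_STATUSES


def backoff_delays(attempts, base=1.0, cap=30.0):
    """Exponential backoff schedule in seconds, capped at `cap`."""
    return [min(cap, base * 2 ** i) for i in range(attempts)]


body = '{"error": {"message": "You must specify a model to call"}}'
print(error_message(body), should_retry(400))
```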

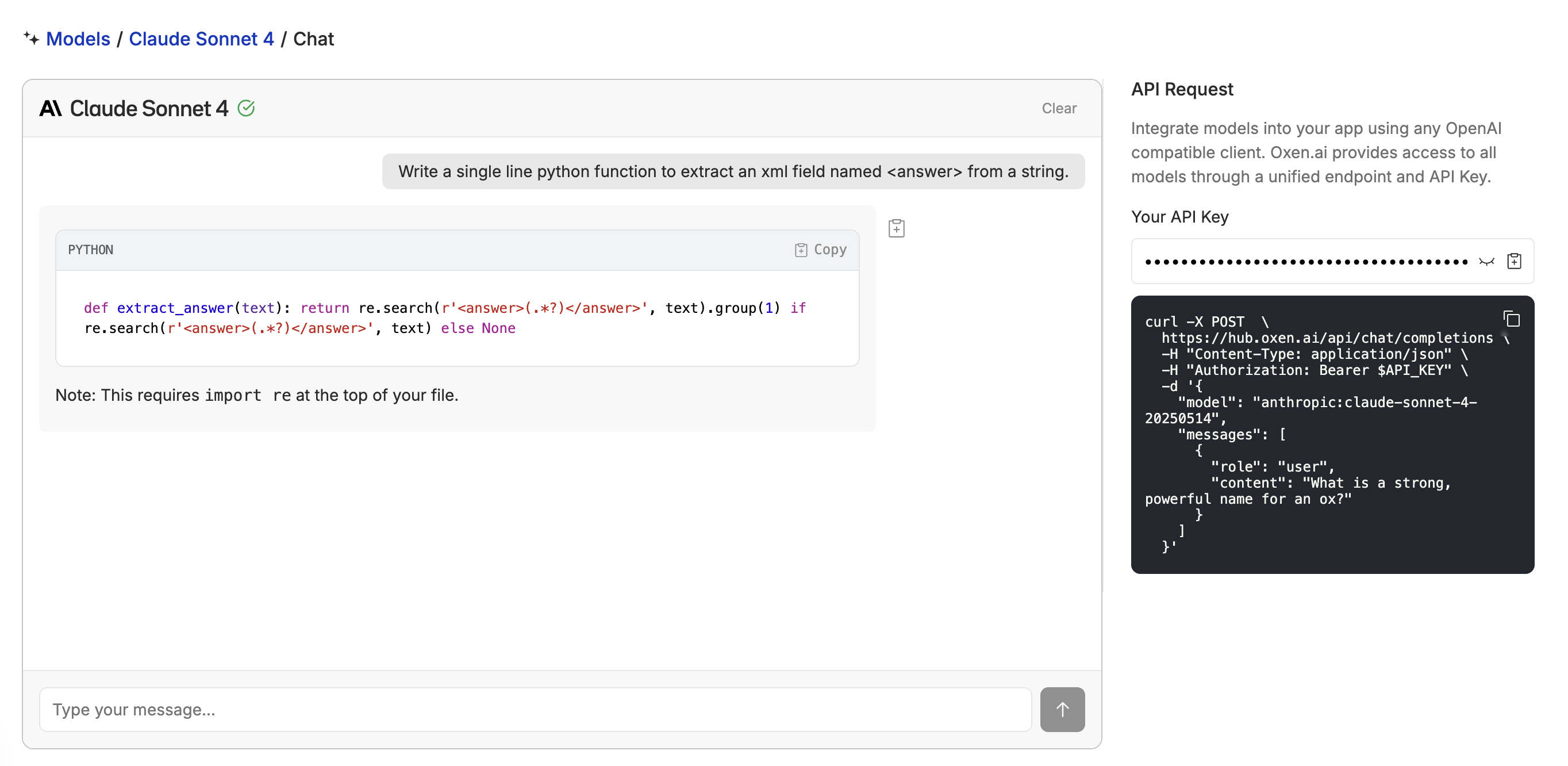

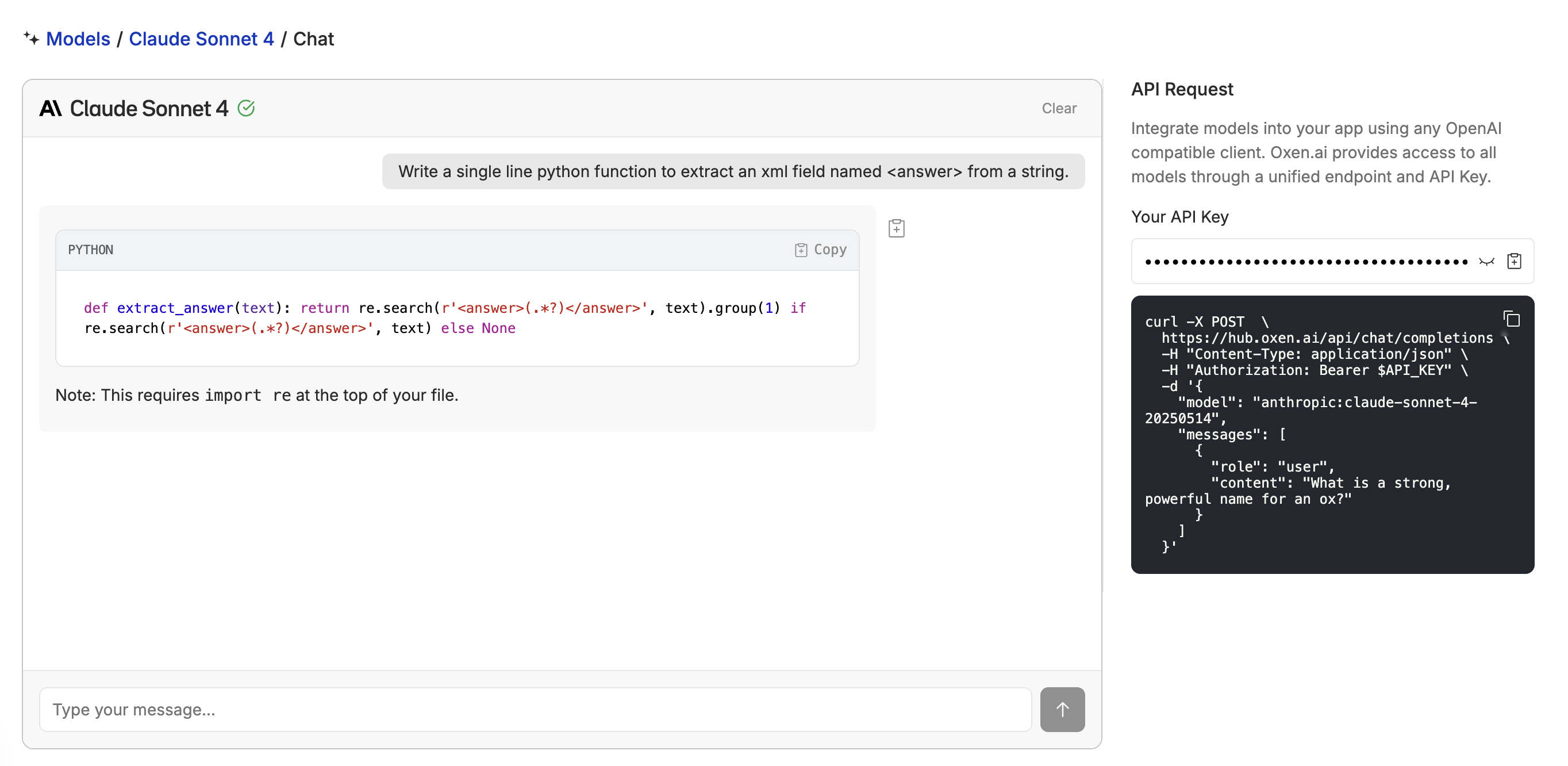

Playground

The model playground lets you test any model interactively before writing code. This is also a great way to test models you’ve fine-tuned after deploying them.